Google’s Ad Experimentation Solutions

Thoughts on Paving the Path to Proven Success, Google’s playbook on experimentation in marketing

According to the iProspect 2020 Global Client Survey, the most important area in marketers’ 2021 roadmaps is the improved integration of brand and performance marketing, which holds the promise of a more consistent consumer experience, improved measurement, stronger insights, better budget allocation and, overall, a more efficient marketing effort.

One way to achieve this is through experimentation.

A recent Harvard Business Review (HBR) study found that e-commerce companies that consistently conduct marketing experiments instead of taking a “one and done” approach produce 30% higher ad performance in the same year and a 45% increase in performance the year after that.

Google says that it reserves 20-30% of every campaign budget for testing, putting theories and ideas to the test before scaling them in order to stay ahead of the curve.

To help other brands stay ahead of the curve too, Google has released a marketing playbook, Paving the path to proven success: Your playbook on experimentation, a valuable guide to getting started with experimentation for your own brand.

Experimenting methodologies and tools

Without dwelling on the value and fundamentals of experimentation, let me go straight to the section of the playbook that caught my attention: Experimenting methodologies and tools.

Google says that it might seem intimidating to have to decide on a testing methodology. However, this simply means identifying how your experiment should be carried out to best understand if your ads are effective in delivering incremental results.

For example, you can run your experiment using user-based, geo-based, or time-based partitions to analyze incrementality or optimization.

But once you’ve identified the testing methodology you want to use, it’s just a matter of finding the most suitable corresponding experimentation solution. Here is an overview of the solutions Google offers:

User-based testing for incrementality

These experiments are ad hoc statistical tests that determine the causal impact of media. They use randomized controlled experiments to determine whether an ad actually changed consumer behavior, and the results can then be used to set channel-level budgets or to measure lift and optimize future campaigns.
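The randomization step is what lets such tests attribute a difference in behavior to the ad rather than to who happened to see it. A minimal sketch, assuming a hypothetical list of user IDs and a 50/50 split; `assign_groups` is an illustrative name, and this is not how Google's tools actually assign users:

```python
import random


def assign_groups(user_ids, test_share=0.5, seed=42):
    """Randomly split users into a test group (eligible to see the ad)
    and a control group (does not see the ad).

    Shuffling with a seeded generator makes the split independent of user
    characteristics while keeping it reproducible for auditing.
    """
    rng = random.Random(seed)
    shuffled = list(user_ids)
    rng.shuffle(shuffled)
    cutoff = round(len(shuffled) * test_share)
    return {"test": shuffled[:cutoff], "control": shuffled[cutoff:]}


# Usage with hypothetical user IDs
groups = assign_groups([f"user_{i}" for i in range(10)])
print(len(groups["test"]), len(groups["control"]))  # 5 5
```

Because every user lands in exactly one group by chance, any systematic difference in later behavior between the groups can be read as the effect of the ad.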

Brand Lift (for video)

Is my target audience more aware of my brand after viewing my video ads?

Brand Lift uses surveys to measure a viewer’s reaction to the content, message, or product in your video ads. Once your ad campaigns are live, these surveys are shown to two groups: an exposed group and a control group.

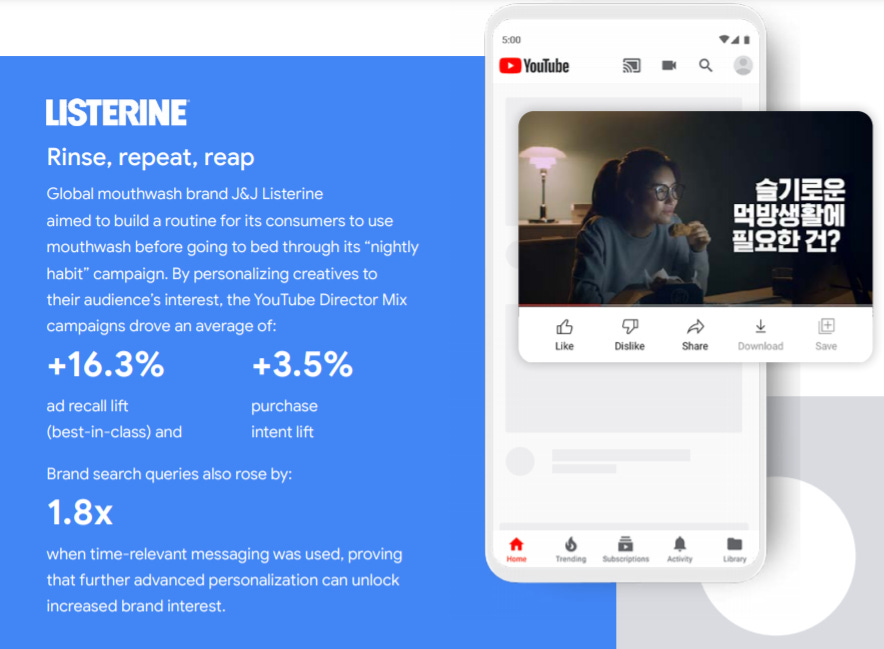

Global mouthwash brand J&J Listerine ran a similar study and achieved a +16.3% lift in ad recall.

As best practices, Google recommends selecting only the metric(s) that most closely align with your marketing goals and using the Video Experiments tool for a clean head-to-head A/B test.
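To make the exposed-versus-control comparison concrete, here is a minimal sketch of how lift can be expressed from survey counts. The absolute/relative definitions below are a common convention and an assumption here; Google's Brand Lift reports may compute and adjust lift differently, and the counts are hypothetical:

```python
def brand_lift(exposed_yes, exposed_total, control_yes, control_total):
    """Compare positive survey responses between exposed and control groups.

    Returns (absolute_lift, relative_lift):
    - absolute lift: exposed positive rate minus control positive rate
    - relative lift: absolute lift divided by the control positive rate
    """
    exposed_rate = exposed_yes / exposed_total
    control_rate = control_yes / control_total
    if control_rate == 0:
        raise ValueError("relative lift is undefined when the control rate is 0")
    absolute = exposed_rate - control_rate
    return absolute, absolute / control_rate


# Hypothetical answers to an ad recall question
abs_lift, rel_lift = brand_lift(exposed_yes=300, exposed_total=1000,
                                control_yes=250, control_total=1000)
print(f"absolute: {abs_lift * 100:.1f} pts, relative: {rel_lift:.1%}")
# absolute: 5.0 pts, relative: 20.0%
```

Reporting both numbers avoids ambiguity: a 5-point absolute gain on a 25% baseline is a 20% relative lift.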

Conversion Lift (for video and display)

A Conversion Lift study measures causal differences in site visit or conversion behavior.

This is done by dividing users into two groups: a test group of users who saw your ad, and a control group of users who were eligible to see your ad but were shown another ad instead.

The differences in downstream behaviors (e.g., conversions and site visits) of the test and control groups are then tracked and compared.
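One standard way to compare the two groups' conversion rates is a pooled two-proportion z-test. The sketch below uses that test as an illustrative assumption; it is not Google's Conversion Lift methodology, and the counts are hypothetical:

```python
import math


def conversion_lift(test_conv, test_users, control_conv, control_users):
    """Relative conversion lift of the test group over the control group,
    with a pooled two-proportion z-test for significance."""
    p_test = test_conv / test_users
    p_control = control_conv / control_users
    lift = (p_test - p_control) / p_control
    # Pooled rate under the null hypothesis that the ad has no effect
    pooled = (test_conv + control_conv) / (test_users + control_users)
    se = math.sqrt(pooled * (1 - pooled) * (1 / test_users + 1 / control_users))
    z = (p_test - p_control) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided p-value under N(0, 1)
    return lift, z, p_value


# Hypothetical counts: 50,000 users per group
lift, z, p = conversion_lift(1200, 50_000, 1000, 50_000)
print(f"lift={lift:.1%} z={z:.2f} p={p:.5f}")
```

A small p-value suggests the difference is unlikely to be chance alone; the lift figure then estimates how much the ad changed conversion behavior.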

As for best practices, Google recommends setting up Google Ads Web Conversion Tracking with gtag.js or Google Tag Manager, and avoiding brand-focused creatives that lack a clear call-to-action.

User-based testing for optimization

The advice from Google is to stay nimble and evolve strategies over time.

As you come up with various ideas, notice how many assumptions you’re making about factors like your audience, your bidding strategy, or your creatives. To understand whether your proposed changes will actually improve your campaign performance, you can use the following experiment solutions.

Campaign Experiments for Search and Display

Campaign experiments allow you to perform a true A/B test without additional budget by directing a percentage of your search campaign traffic or display campaign cookies from an existing campaign to a test campaign.
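Splitting traffic "by a percentage" only works as an A/B test if each cookie stays in the same arm. A common way to do this is deterministic hash bucketing; the sketch below assumes that approach, and the function and experiment names are hypothetical, not part of Google Ads:

```python
import hashlib


def route(cookie_id: str, experiment: str, experiment_pct: int) -> str:
    """Deterministically route a cookie to the 'base' or 'experiment' campaign.

    Hashing the experiment name with the cookie ID gives each cookie a
    stable bucket in [0, 100). The same cookie always lands in the same
    arm, so the original campaign's traffic is split without overlap.
    """
    if not 0 <= experiment_pct <= 100:
        raise ValueError("experiment_pct must be between 0 and 100")
    digest = hashlib.sha256(f"{experiment}:{cookie_id}".encode()).hexdigest()
    bucket = int(digest, 16) % 100
    return "experiment" if bucket < experiment_pct else "base"


# Usage: send roughly 30% of hypothetical cookies to the experiment arm
arms = [route(f"cookie-{i}", "bidding-test", 30) for i in range(10_000)]
print(arms.count("experiment") / len(arms))
```

Salting the hash with the experiment name means a cookie's arm in one experiment does not predict its arm in another.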

Google says that Optimization Score is a useful guide to prioritizing the strongest opportunities in your campaigns. Check the personalized recommendations that are surfaced so you can boost performance.

With campaign experiments, you can test bidding strategies and new keyword targeting strategies. Wondershare tested a bidding strategy, and TAFE QLD aimed to measure the incremental performance delivered by automated Search.

Landing pages and creatives can also be tested. LG Electronics, for example, improved its mobile site speed with AMP to deliver a better browsing experience.

Shakey’s Pizza, a leading pizza restaurant chain in the Philippines, used tailored creatives in Responsive Display Ads to drive customers to its online delivery website.

Ad Variations for Search

Google notes that by honing your ad text, you can learn a lot about your users’ preferences and improve your performance.

Ad variations help you test creative variations in search text ads at scale. This tool is best used when you want to test a single change across multiple campaigns or even your entire account. For example, set up an ad variation to compare how well your ads perform if you were to change your call to action from “Buy now” to “Buy today.”
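The "single change across many ads" idea can be pictured as a find-and-replace applied to every ad that contains the target text. A minimal sketch, assuming a hypothetical, simplified `TextAd` record; this does not use or mirror the Google Ads API:

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class TextAd:  # hypothetical, simplified ad record
    campaign: str
    headline: str
    description: str


def make_variation(ads, find, replace_with):
    """Apply one find-and-replace change to every ad that contains `find`.

    Returns (original, variant) pairs so each variant can be compared
    against the ad it was derived from.
    """
    pairs = []
    for ad in ads:
        if find in ad.headline or find in ad.description:
            variant = replace(
                ad,
                headline=ad.headline.replace(find, replace_with),
                description=ad.description.replace(find, replace_with),
            )
            pairs.append((ad, variant))
    return pairs


ads = [
    TextAd("Shoes", "Running Shoes Sale", "Buy now and save 20%."),
    TextAd("Bags", "Leather Bags", "Buy now, ships free."),
    TextAd("Hats", "Summer Hats", "New styles in stock."),
]
for original, variant in make_variation(ads, "Buy now", "Buy today"):
    print(original.campaign, "->", variant.description)
```

Keeping the change to one phrase means any performance difference can be attributed to that phrase alone.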

Video Experiments for Video

In a world where consumer attention is a scarce commodity, Video Experiments enables you to test different variables of your video campaign head-to-head on YouTube.

A randomized A/B test is created by assigning users to isolated groups (arms) and exposing each group to only a single campaign. Performance is then compared across the groups and checked for statistical significance.
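When more than two arms are compared, each extra comparison raises the chance of a false positive. The sketch below compares every arm with one baseline arm using a pooled two-proportion z-test and a Bonferroni correction; both choices are illustrative assumptions, not how Video Experiments computes significance, and the counts are hypothetical:

```python
import math


def compare_arms(results, baseline="control", alpha=0.05):
    """Compare every arm with the baseline arm.

    results maps arm name -> (users_with_positive_outcome, users_in_arm).
    Each comparison is a pooled two-proportion z-test; alpha is divided by
    the number of comparisons (Bonferroni) so that testing several
    creatives at once does not inflate false positives.
    """
    b_pos, b_n = results[baseline]
    arms = [arm for arm in results if arm != baseline]
    threshold = alpha / len(arms)
    report = {}
    for arm in arms:
        pos, n = results[arm]
        diff = pos / n - b_pos / b_n
        pooled = (pos + b_pos) / (n + b_n)
        se = math.sqrt(pooled * (1 - pooled) * (1 / n + 1 / b_n))
        p_value = math.erfc(abs(diff / se) / math.sqrt(2)) if se > 0 else 1.0
        report[arm] = {"diff": diff, "p_value": p_value,
                       "significant": p_value < threshold}
    return report


# Hypothetical ad recall survey counts per arm
report = compare_arms({
    "control": (200, 5000),
    "creative_a": (260, 5000),
    "creative_b": (215, 5000),
})
for arm, r in report.items():
    print(arm, f"{r['diff']:+.2%}", f"p={r['p_value']:.3f}", r["significant"])
```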

Google says that with this, brands can test different creative approaches, such as visual language, tuning for audience and building for attention, against a single audience segment.

L’Oreal wanted to test if sequencing their video ads could help raise top-of-mind awareness and recall for the brand’s Sensational Liquid Matte lipstick.

By delivering the right message at the right moment to the right audience, Video Ad Sequencing unlocked a 2.6x ad recall lift and sparked 1.3x brand interest compared to the control campaign with no sequencing.

Multiple audiences can also be tested with a single creative, as Downy did. When the fabric softener brand went beyond demographic audiences and used advanced audiences (In-market + Affinity + Life Events), its bumper ads campaign with promotional messaging drove +23.3% ad recall and +12.9% purchase intent.

Geo-based testing

Geo experiments are another well-established method designed to measure the causal, incremental effects of ad strategy or spend changes.

Vodafone implemented a geo-based testing strategy to drive offline store impact, and 37GAMES decided to explore the incremental impact of video branding ads as a complement to its app campaigns.

And this is how they performed:

GeoX (available in APAC in Australia, India and Japan)

GeoX is a platform to design, execute, analyze and interpret controlled experiments, using open source methodologies grounded in robust statistical research.

Google says: “With GeoX, comparable and non-overlapping geographic regions are assigned as control and test groups. This can be done at a national, state, city or even postal code level. When the groups are compared, we can then attribute any uplift in success metrics to the advertising spend that was allocated.”
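The quote above describes two steps: assigning comparable, non-overlapping regions to test and control, then attributing the difference to the extra spend. A minimal sketch of one simple design, matched pairs plus an incremental return on ad spend (iROAS) ratio. This is an illustrative assumption, not GeoX's own methodology; the region codes, function names and figures are hypothetical:

```python
import random


def pair_and_assign(pre_period_sales, seed=0):
    """Matched-pairs geo assignment (a simplified illustration).

    Regions are sorted by a pre-period metric, adjacent regions are paired
    so each pair is comparable, and a coin flip decides which region in
    each pair is test and which is control. No region appears in two
    pairs, so the groups do not overlap.
    """
    regions = sorted(pre_period_sales, key=pre_period_sales.get)
    if len(regions) % 2:
        raise ValueError("this sketch needs an even number of regions")
    rng = random.Random(seed)
    pairs = []
    for i in range(0, len(regions), 2):
        test, control = regions[i], regions[i + 1]
        if rng.random() < 0.5:
            test, control = control, test
        pairs.append((test, control))
    return pairs


def incremental_roas(pairs, revenue_change, spend_change):
    """Summed test-minus-control difference in revenue change (vs the
    pre-period) divided by the summed test-minus-control difference in
    spend change."""
    d_revenue = sum(revenue_change[t] - revenue_change[c] for t, c in pairs)
    d_spend = sum(spend_change[t] - spend_change[c] for t, c in pairs)
    return d_revenue / d_spend


# Hypothetical postal-code regions with pre-period weekly sales
sales = {"2000": 120, "2010": 118, "3000": 95, "3004": 99, "4000": 60, "4005": 58}
print(pair_and_assign(sales, seed=7))
print(incremental_roas([("A", "B")], {"A": 500, "B": 100}, {"A": 200, "B": 0}))  # 2.0
```

Pairing on a pre-period metric keeps each test region close to its control, so differences after the spend change are less likely to reflect pre-existing gaps between regions.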

The tool enables the measurement of both online-to-online and online-to-offline success metrics. There are three main ways you can use GeoX to test for various objectives:

Time-based causal impact analysis

A time-based or pre-post analysis is a statistical methodology to estimate the effect of implemented changes in an experiment. This method essentially leverages specific pre-period information to get tighter confidence intervals and more accurate estimates of the treatment effect.
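To show how pre-period information is used, here is a minimal sketch of a regression-based pre-post estimate: fit the treated metric against an unaffected control series in the pre-period, predict the post-period counterfactual, and take actual minus predicted. This simplified ordinary least squares version is an assumption for illustration; it is not Google's CausalImpact (which uses Bayesian structural time series), it omits confidence intervals, and the series are hypothetical:

```python
def pre_post_effect(control_pre, treated_pre, control_post, treated_post):
    """Estimate the post-period effect on a treated series.

    1. Fit treated = a + b * control by ordinary least squares on the
       pre-period only.
    2. Use that fit to predict what the treated series would have been
       in the post-period without the change (the counterfactual).
    3. The effect is actual minus predicted, summed over the post-period.
    """
    n = len(control_pre)
    mean_x = sum(control_pre) / n
    mean_y = sum(treated_pre) / n
    sxx = sum((x - mean_x) ** 2 for x in control_pre)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(control_pre, treated_pre))
    b = sxy / sxx
    a = mean_y - b * mean_x
    predicted = [a + b * x for x in control_post]
    pointwise = [y - p for y, p in zip(treated_post, predicted)]
    return sum(pointwise), pointwise


# Hypothetical weekly conversions: a control series and the treated series
total, weekly = pre_post_effect(
    control_pre=[100, 110, 120, 130], treated_pre=[52, 57, 62, 67],
    control_post=[140, 150], treated_post=[82, 87],
)
print(total, weekly)  # 20.0 [10.0, 10.0]
```

If the control series is itself affected by seasonality or other noise that the treated series does not share, the counterfactual and therefore the estimated effect will be biased.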

FlexiSpot, a manufacturer of home office desks, wanted to test whether adding Discovery ads to its existing Search ads could boost quality traffic.

Similarly, Korea’s largest freelance market platform was keen to acquire more users and decided to explore if taking a cross-product approach across Search ads, Discovery ads and YouTube Trueview for Action video ads (leveraging remarketing, customer match and custom intent audiences) could drive conversion growth at scale efficiently.

The cross-channel campaign delivered 3x conversions and 73% higher ROAS with 2x the budget.

With such analyses, it can be difficult to isolate the impact of seasonality and other external factors (known as noise) and tell whether changes in success metrics are due to the ad intervention alone. As a result, these analyses are typically only directionally helpful and rarely statistically significant.

Regardless of the solution, Google advises following these testing best practices:

This is a simple principle that applies to everything from baking to experimenting: the quality of your input determines the quality of the output. To achieve conclusive results, it’s crucial to exercise rigour and keep these factors in mind: